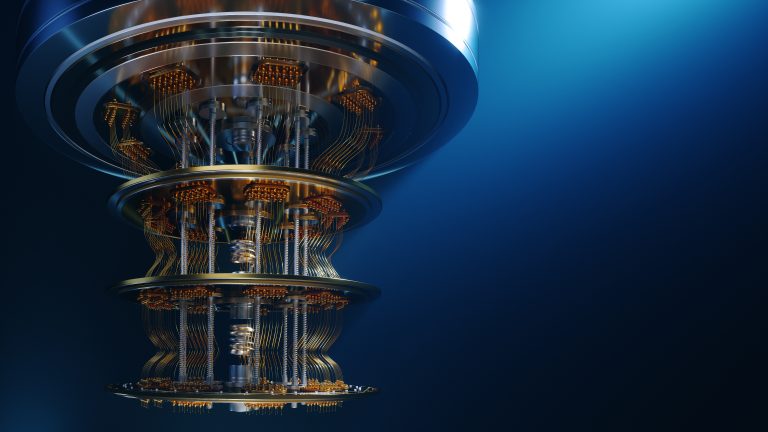

Our brains are among the most complex and powerful information processing systems we know of. They allow us to learn, adapt, and solve problems in ways that even the most advanced computers struggle to replicate. But what if we could build machines that mimic the brain’s architecture and function? This is the ambitious goal of neuromorphic computing, a rapidly evolving field that could reshape artificial intelligence (AI).

Beyond the Limits of Silicon: Inspiration from the Biological

Traditional computers process information on chips that keep memory and processing in separate units and shuttle data back and forth between them. While incredibly powerful, these chips operate in a fundamentally different way than our brains. Brains use networks of interconnected neurons that communicate through electrical impulses, and they store and process information in the same place. Neuromorphic computing aims to bridge this gap by creating hardware and software inspired by the brain’s structure and function.

Instead of conventional processors, neuromorphic systems use specialized hardware (often still built from silicon) that mimics the behavior of neurons and the connections between them. These “artificial neurons” are connected in intricate networks, similar to the neural pathways in the brain. This allows them to process information in a parallel and distributed fashion, much like our brains do.
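To make the idea of an artificial neuron more concrete, here is a minimal sketch in Python of a leaky integrate-and-fire neuron, a common simplified model used in spiking neural networks. It is an illustration only, not a description of how any particular neuromorphic chip works. The function names (`simulate_lif`, `simulate_layer`) and the parameter values (`threshold`, `leak`, `reset`) are assumptions chosen for this example.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_current: list of input values, one per time step.
    Returns a list of 0/1 values, where 1 means the neuron spiked at that step.
    """
    potential = reset
    spikes = []
    for current in input_current:
        # The stored potential decays ("leaks") a little, then the input is added.
        potential = leak * potential + current
        if potential >= threshold:
            # Enough charge has built up: emit a spike and start over.
            spikes.append(1)
            potential = reset
        else:
            spikes.append(0)
    return spikes


def simulate_layer(input_spikes, weights, **neuron_params):
    """Feed several input spike trains into a small layer of neurons.

    input_spikes: list of spike trains (one list of 0/1 values per input).
    weights: weights[j][i] is the connection strength from input i to neuron j.
    Each neuron depends only on its own weighted inputs, so on real hardware
    all of them could be updated in parallel.
    """
    steps = len(input_spikes[0])
    outputs = []
    for neuron_weights in weights:
        # Weighted sum of all input spikes arriving at each time step.
        current = [
            sum(w * train[t] for w, train in zip(neuron_weights, input_spikes))
            for t in range(steps)
        ]
        outputs.append(simulate_lif(current, **neuron_params))
    return outputs


if __name__ == "__main__":
    # A steady weak input needs three steps to push the neuron over threshold.
    print(simulate_lif([0.5, 0.5, 0.5, 0.0, 0.0]))  # [0, 0, 1, 0, 0]

    inputs = [[1, 0, 1], [1, 1, 0]]
    weights = [[0.6, 0.6], [0.2, 0.2]]
    print(simulate_layer(inputs, weights))  # [[1, 0, 1], [0, 0, 0]]
```

In this toy layer, the strongly connected neuron spikes whenever enough input arrives, while the weakly connected one stays silent. That sparseness is part of the appeal: a neuron that does not spike sends nothing downstream.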

Unlocking Unprecedented AI Power: The Potential Benefits

The potential benefits of neuromorphic computing are vast:

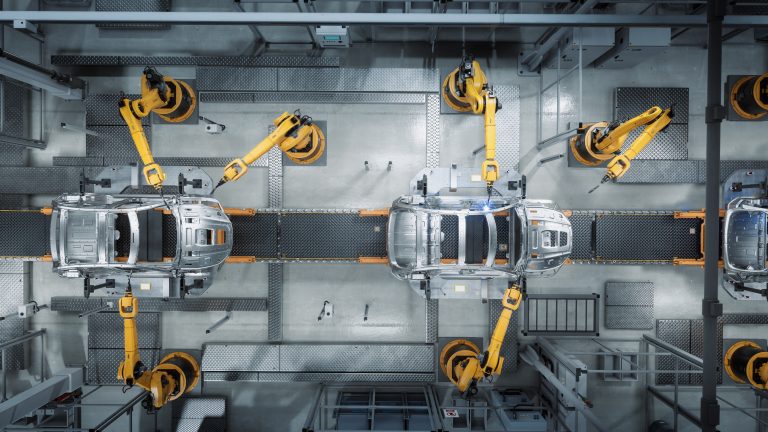

- Enhanced Learning: Neuromorphic systems could learn and adapt to new information faster than traditional AI, which may help in areas like machine translation and autonomous vehicles.

- Pattern Recognition: Mimicking the brain’s ability to recognize patterns could revolutionize fields like medical diagnosis and anomaly detection in financial markets.

- Low Power Consumption: The brain runs on roughly 20 watts, a small fraction of the power drawn by the hardware behind modern AI systems. Neuromorphic systems could pave the way for low-power AI devices with extended battery life.

- Unveiling the Brain’s Secrets: By building brain-like systems, we might gain a deeper understanding of how our own brains work, leading to advancements in neuroscience and brain research.

Challenges and the Road Ahead: Building a Brain-in-a-Box

Despite the promise, significant challenges remain. Replicating the full complexity of the brain is a daunting task. Additionally, developing efficient algorithms that can leverage the unique capabilities of neuromorphic hardware is an ongoing research effort.

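However, the field is rapidly advancing. Companies and research institutions are investing heavily in neuromorphic computing, and research chips such as IBM’s TrueNorth, Intel’s Loihi, and the University of Manchester’s SpiNNaker have already shown that brain-inspired networks can run on dedicated hardware.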

The Future of AI: A New Era of Intelligence

Neuromorphic computing isn’t about replacing traditional AI; it’s about creating a new paradigm for artificial intelligence. By borrowing design principles from the brain, we may unlock new levels of intelligence and problem-solving ability in machines. The future of AI is likely to be a hybrid of traditional and neuromorphic approaches, working together to solve some of humanity’s most pressing challenges. The journey to build a “brain-in-a-box” may be long, but the potential rewards are immense. Neuromorphic computing offers a glimpse into a future where machines can not only think but also think like us.